Here is a simple, minimal XGBoost regression, adapted from various tutorials by @julia:

library(tidymodels)

library(tidypredict)

tidymodels_prefer()

#Initial split, generate training and testing

mysplit <- initial_split(iris %>% select(-Species), strata=Petal.Width)

training_set <- training(mysplit)

test_set <- testing(mysplit)

#Set up the model specification

#The hyperparameters will be tuned

xgb_spec <- boost_tree(

trees = 1000,

tree_depth = tune(),

min_n = tune(),

loss_reduction = tune(),

sample_size = tune(),

mtry = tune(),

learn_rate = tune()

) %>%

set_engine("xgboost") %>%

set_mode("regression")

#Set up a space-filling grid design to cover the hyperparameter space as well as possible

xgb_grid <- grid_latin_hypercube(

tree_depth(),

min_n(),

loss_reduction(),

sample_size = sample_prop(),

finalize(mtry(), training_set), #gets treated differently b/c it depends on actual # of predictors in data

learn_rate(),

size = 30

)

#Put the model specification into a workflow

xgb_wf <- workflow() %>%

add_formula(Petal.Width ~.) %>%

add_model(xgb_spec)

#Create cross-validation resamples for tuning the model

input_folds <- vfold_cv(training_set, strata=Petal.Width)

#Use tunable workflow to tune

doParallel::registerDoParallel()

xgb_res <- tune_grid(

xgb_wf,

resamples = input_folds,

grid = xgb_grid,

control = control_grid(save_pred = TRUE)

)

#Select the best parameters based on RMSE

best_rmse <- select_best(xgb_res, metric = "rmse") #name the argument; recent versions of tune reject a positional metric

#Finalize the tuneable workflow using the best parameters

final_xgb <- finalize_workflow(

xgb_wf,

best_rmse

)

#############

#Fit the final best model to training set and evaluate the test set

final_res <- last_fit(final_xgb, mysplit)

#############

#Get the model-predicted values of the test set

pred_df <-

final_res %>%

collect_predictions() %>%

as.data.frame()

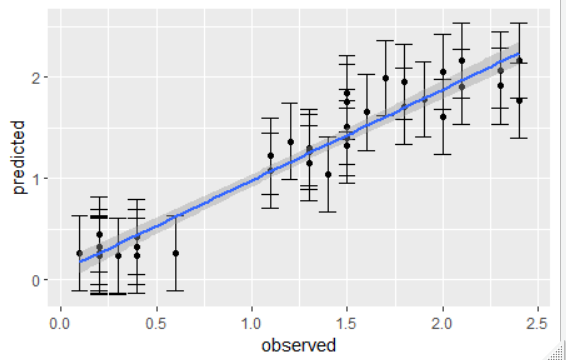

How can I obtain prediction intervals for these predictions? I want a measure of the uncertainty associated with each individual prediction, such as a 95% prediction interval.

I know that collect_metrics() will give me error estimates, but those summarize overall model performance rather than the uncertainty of any single prediction.
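To make that distinction concrete, here is a minimal base R sketch of what a single RMSE can and cannot give. The `preds` vector and the `rmse` value are hypothetical numbers chosen for illustration, not output from the model above, and the band is only meaningful if the errors are roughly normal with constant variance.

```r
# Hypothetical values for illustration only (not from the tuned model)
preds <- c(0.25, 1.30, 2.10)  # point predictions
rmse  <- 0.20                 # one overall RMSE, of the kind collect_metrics() reports

# Assumes roughly normal errors with constant variance
z <- qnorm(0.975)

naive_pi <- data.frame(
  .pred       = preds,
  .pred_lower = preds - z * rmse,
  .pred_upper = preds + z * rmse
)

naive_pi
```

Every row gets the same width, so this band says nothing about which predictions are more or less certain than others.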

Prediction intervals have been previously discussed here, with some interesting comments from @max and ultimately a fantastic blog post from @brshallo.

However, it's not clear to me whether a bootstrap approach to prediction intervals would work for an XGBoost regression such as my tuned model here. Could I apply it without having to redo the predictions and double the computation time? If so, how could I adapt the above code to produce the prediction intervals?
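For reference, one option that needs no refitting is to build intervals from the empirical quantiles of held-out residuals. This is a residual-quantile approach, not the bootstrap, and it is only a sketch: `residual_pi()` is a hypothetical helper written for this example, and the toy vectors stand in for real out-of-fold predictions.

```r
# Hypothetical helper: intervals from empirical quantiles of held-out residuals.
# Assumes the residuals on new data behave like the held-out residuals.
residual_pi <- function(holdout_truth, holdout_pred, new_pred, level = 0.95) {
  resid <- holdout_truth - holdout_pred
  alpha <- 1 - level
  q <- quantile(resid, probs = c(alpha / 2, 1 - alpha / 2), names = FALSE)
  data.frame(
    .pred       = new_pred,
    .pred_lower = new_pred + q[1],
    .pred_upper = new_pred + q[2]
  )
}

# Toy data standing in for out-of-fold truth and predictions
set.seed(123)
truth    <- rnorm(200, mean = 1.2, sd = 0.7)
oof_pred <- truth + rnorm(200, sd = 0.2)

out <- residual_pi(truth, oof_pred, new_pred = c(0.3, 1.5, 2.2))
out
```

In the workflow above, the held-out residuals could plausibly come from `collect_predictions(xgb_res, parameters = best_rmse)`, since the tuning run used `save_pred = TRUE`, and `new_pred` would be the `.pred` column of `pred_df`. The resulting intervals are asymmetric if the residuals are skewed, but they still have the same width for every prediction.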

Thanks!