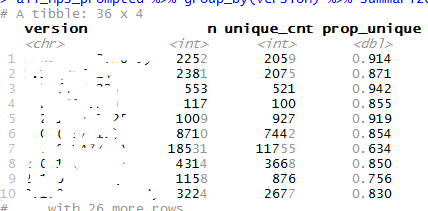

It is a new feature in the current release of the tibble package that highlights the significant digits and greys out the rest of the numbers. It is a part of the new color features for the package. There has been a lot of reaction to the newest release of tibble and as far as I can tell, there will be another release soon to change/fix a lot of the things that people have disliked. I am not sure whether this feature will remain or not.

What's the rationale behind deciding what is and isn't significant? (I'll be satisfied with a link to whatever justification there is).

From the look of it, it appears to be assuming that the fewest number of digits in a column is the number of significant digits. That seems like a sketchy assumption to me, so I hope I'm wrong.

It highlights the first three digits (i.e. the digits that represent > 99% of the value of the number).

Is there a theoretical or conceptual justification for that, or is it arbitrary?

I ask only because this behavior doesn't correspond to my understanding of significant digits being a way to express uncertainty of measurement.

I feel exactly the same. I also think that this looks arbitrary, and I have opened a corresponding issue.

I feel like highlighting just the values before the first comma is arbitrary as well. If we adopt a definition of significant figures based on measurement error, such as

"The number of significant figures in a measured quantity is the number of digits that are known accurately, plus one that is in doubt." (Rounding and Significant Figures, Laboratory Analytical Procedure, National Renewable Energy Laboratory, 10.1.2; https://www.nrel.gov/docs/gen/fy08/42626.pdf)

it is reasonable to claim that significant figures are determined on the basis of the accuracy of measurement, and not based on the appearance of the result.

As an example, suppose we have an instrument, say a 1 meter ruler, that is accurate to the centimeter. Standard laboratory practice is that I should be able to read up to the millimeter. Suppose I use that ruler to measure two lengths of string and get the results 7.4 cm and 93.2 cm. The first measurement has two significant digits, and the second has three. To extend that, the sum of those two values, 100.6 cm, has four significant digits. In all of those cases, the number of significant digits is determined by how many digits are between the first non-zero digit and the first uncertain digit.
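
The addition case can be sketched in a few lines of base R. This is only an illustration of the usual textbook rule (a sum is reported to the fewest decimal places among its terms); `decimal_places()` is a hypothetical helper, and the readings are kept as strings because the precision lives in how the value was recorded, not in the number itself.

```r
# Hypothetical helper: decimal places of a measurement as it was written down.
decimal_places <- function(x) {
  parts <- strsplit(x, ".", fixed = TRUE)[[1]]
  if (length(parts) < 2) 0L else nchar(parts[2])
}

readings <- c("7.4", "93.2")  # cm, as read off the ruler

# A sum is only as precise as its least precise term.
places <- min(vapply(readings, decimal_places, integer(1)))
total  <- round(sum(as.numeric(readings)), places)

format(total, nsmall = places)
#> [1] "100.6"
```

The point of the sketch is that the number of digits reported comes from the instrument (via the recorded readings), not from inspecting the result.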

It gets a little more complicated when you move into multiplication (and a lot more complicated when using logarithmic functions), but the principle still applies that as long as you know the limit of precision of the measuring instrument, you can trace the appropriate significant figures through all of the calculations. Trying to guess the appropriate significant figures from the values, however, is risky business.
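
For multiplication, the usual textbook rule of thumb is that a product keeps as many significant figures as its least precise factor. A minimal sketch, assuming the two lengths from the ruler example are the factors and that their significant-figure counts (2 and 3) are known from the instrument rather than guessed from the printed values:

```r
# Measurements and their significant figures, as known from the instrument
# (these counts are inputs, not something inferred from the numbers).
a <- 7.4;  sf_a <- 2
b <- 93.2; sf_b <- 3

product  <- a * b                             # raw product, about 689.68
reported <- signif(product, min(sf_a, sf_b))  # keep 2 significant figures

reported
#> [1] 690
```

Note that `signif()` only rounds; it has no way of knowing how many figures are appropriate, which is why the counts have to be supplied.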

Additionally, significant digits are only relevant to measured values. In the initial posting, the columns appear to be counts/integers. Count data isn't subject to imprecision, and so, strictly speaking, significant figures don't apply. The proportion of two integers is also not subject to significant figures; since the integers have infinite precision, the proportion does as well (at least as far as the machine tolerance).

Which brings me back to my question: is there a theoretical or conceptual justification for the illustrated behavior, preferably something published? If there is, then the behavior may be appropriate. If there isn't, I worry that this behavior will only contribute more confusion to the topic of significant figures.

Sorry, I wrongly interpreted your intention and edited my post. And you are right that my suggestion is also somewhat arbitrary.

I think it is a good start to clarify things a bit here. However, any way of highlighting digits will be somewhat arbitrary. So if one does it, it should have a well-thought-out motivation behind it, and of course a theoretical foundation might help. On the other hand, even if there is a deep theory behind it that covers all the points you discussed, it will still feel strange from a practical point of view when R behaves differently from all other analytical frontends/tools/languages.

My alternative suggestion is purely pragmatic (without a deep theory). The reasoning is that a number might be cognitively easier to read when the first digits, those before the comma, are highlighted. This would at least be very transparent without knowing a specific theory. The output would also appear cleaner to me. Of course, there would also be issues to take into account, like scientific notation and so on...

I think this is what @hadley is pointing out.

Notice that, for example, specifying just the top three digits produces a result that is always at least 99% accurate. Although choosing a threshold of 99% is arbitrary, most would in general consider it good enough.

    # Say the actual number is 10099,
    # but you say it is about 100e2.
    # Someone "reconstructs" the number as
    100e2
    #> [1] 10000
    # The error is
    e <- (10099 - 100e2) / 10099
    e
    #> [1] 0.009802951
    # Accuracy
    1 - e
    #> [1] 0.990197

    # Say the actual number is 100999,
    # but you say it is about 100e3.
    # Someone "reconstructs" the number as
    100e3
    #> [1] 1e+05
    # The error is
    e <- (100999 - 100e3) / 100999
    e
    #> [1] 0.009891187
    # Accuracy
    1 - e
    #> [1] 0.9901088

    # Say the actual number is 1009999,
    # but you say it is about 100e4.
    # Someone "reconstructs" the number as
    100e4
    #> [1] 1e+06
    # The error is
    e <- (1009999 - 100e4) / 1009999
    e
    #> [1] 0.00990001
    # Accuracy
    1 - e
    #> [1] 0.9901
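
The three cases above follow one pattern, which can be sketched as a small function. This is an illustration only, not how tibble or pillar computes anything: `truncate_digits()` is a hypothetical helper that keeps the first three digits of a positive number and zeroes out the rest. Because the discarded remainder is always smaller than one unit of the third digit, while the number itself is at least 100 such units, the relative error stays below 1%.

```r
# Hypothetical helper: keep the first `digits` digits of a positive number
# and replace everything after them with zeros.
truncate_digits <- function(x, digits = 3) {
  magnitude <- 10^(floor(log10(x)) - digits + 1)
  floor(x / magnitude) * magnitude
}

actual <- c(10099, 100999, 1009999, 99999, 123456)
kept   <- truncate_digits(actual)

# Relative error introduced by dropping the remaining digits.
rel_error <- (actual - kept) / actual

all(rel_error < 0.01)
#> [1] TRUE
```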

I understand the mathematics behind the approach. I just question the rationale behind choosing significant digits this way. To put a loaded term on it, I have reservations about its scientific validity. Significant digits are intended to accurately reflect the value of a measurement, not to accurately reflect the value of a number. Arbitrarily choosing significant digits without the context of the instrument of measurement comes with a lot of perils and I worry that it will mislead people that don't have a firm grasp of significant digits.

On that note, if we weren't calling these "significant digits," I probably wouldn't care. Significant digits is a widely accepted and recognized concept in the sciences, and I don't see the relationship between this implementation and the broader consensus.

Are we calling these "significant digits"?

@tbradley in an earlier comment called them "significant digits". @hadley just called them the "first three digits" and alluded to their accuracy as being constant.

And that may be the source of my confusion. No one has yet corrected the use of "significant digits." If that is not what they are to be called, then I will sheepishly excuse myself from the conversation. (how embarrassing)

I thought that was what they had been referred to but I could definitely be wrong, sorry for any confusion that might have caused.

I'm not trying to chastise anyone (no need to sheepishly skulk away, @nutterb), I just didn't think that's what they were being called. I could also be wrong (wouldn't be the first time), I was just surprised to hear that.

I'll have you know that skulking has been my standard ambulatory practice for thirty years

As a follow-up, I went and looked through some of the issues, and it appears that "significant figures" is the term being used for this behavior, most notably in the option pillar.sigfig that controls it.

Hi all,

Although I am far from an expert on this topic (just remembering what I was taught in physics lab), I think that @nutterb makes a good point here. Also, the newest post regarding tibble 1.4.2 explicitly mentions "significant digits":

pillar.sigfig: The number of significant digits that will be printed and highlighted, default: 3. (Set the pillar.subtle option to FALSE to turn off highlighting of significant digits.) See below for an example ...

So, for example, we get:

    tibble(val = c(0.01, 1, 100))
    #> # A tibble: 3 x 1
    #>        val
    #>      <dbl>
    #> 1   0.0100
    #> 2   1.00
    #> 3 100

and:

    options(pillar.sigfig = 5)
    tibble(val = c(0.01, 1, 100))
    #> # A tibble: 3 x 1
    #>          val
    #> 1   0.010000
    #> 2   1.0000
    #> 3 100.0

By the "theory" of significant digits (e.g., Significant Digits has a nice explanation, but many other sites address this), this does not appear to be correct.

I mean: if a value has been recorded as "0.01", then it always has just one significant digit, and adding some trailing zeroes, highlighting them, and referring to them as "significant digits" seems incorrect and can be misleading. For example, if that was a measurement in meters, showing and highlighting the trailing zeroes as in the second case would suggest that the measurement was "certain" up to the micrometer (@nutterb: please correct me if I am wrong!). The same goes for "100", which by the same definition of significant digits actually has only one significant digit (I know... this is confusing...).
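
To make the convention concrete, here is a rough sketch of counting significant digits from a value as it was written down. `count_sigfigs()` is a hypothetical helper, not part of tibble or pillar, and it assumes the common convention that trailing zeros count only when a decimal point is present. It works on strings because a stored double cannot distinguish "0.01" from "0.010000".

```r
# Hypothetical helper: significant digits of values as recorded (strings).
count_sigfigs <- function(x) {
  digits    <- sub("^[+-]", "", x)                 # drop any sign
  has_point <- grepl(".", digits, fixed = TRUE)
  digits    <- gsub(".", "", digits, fixed = TRUE) # drop the decimal point
  digits    <- sub("^0+", "", digits)              # leading zeros never count
  # Without a decimal point, trailing zeros are assumed to be placeholders.
  digits    <- ifelse(has_point, digits, sub("0+$", "", digits))
  nchar(digits)
}

count_sigfigs(c("0.01", "0.010000", "100", "100.0"))
#> [1] 1 5 1 4
```

Under this convention, "0.01" and "0.010000" make different claims about precision, which is the distinction that padding with highlighted zeros blurs.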

Indeed, what appears to be highlighted by tibble seems to be (I did not test this thoroughly) the first XX digits starting from the first nonzero (significant) one, with trailing zeroes added if necessary (though even that is not applied consistently, because, for example, 100 should then become 100.00; the dot seems to be treated as a digit in some cases).

I therefore agree with @nutterb that it would probably be better not to refer to tibble's behaviour as being related to "significant digits". I know this may seem just a "terminology" issue. But we all know that terminology in science is, well, ... important!

Hope this makes sense and that it helps!

Thanks for this feedback. I agree that words are super important, especially since we never know when in the learning process a given individual learns something (i.e. it could feasibly be someone's first time encountering the words significant digits/figures, especially if English isn't their first language). Definitely on the discussion block.