Hi all,

We're looking for some advice on how to configure shinyapps.io to handle a large app. We have a large app (xxxlarge) and the "professional" level shinyapps.io plan.

Problem:

When we have 6-10 users hitting the app simultaneously, users start getting kicked off and having to reload.

The reloading is a huge issue because loading the data for the app takes almost a minute on IO even when there is only one user (load time is 20 seconds running locally on a six-core 2018 MacBook Pro). With multiple users, the load time can spike to several minutes.

There is also a lag when displays are being generated on IO.

When running locally, most of the plots generate with very little lag. On the server, the lag is just noticeable with only one user, but plots can become very slow (5-10 seconds) with 7-10 users. When 6-7 users hit displays that are processing-intensive, some of the users get kicked off and have to reload.

Optimization efforts to date:

Data is loaded into the global environment and a conditional only allows data loading if a data-load-initiated-flag has not already been triggered. Once in the app, most of the analytics are live because of the large number of options for grouping/filtering the data.
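To make the loading guard concrete, here is a minimal sketch of the pattern rather than our actual code. The names `load_app_data()`, `ensure_data_loaded()`, `DATA_LOADED`, and `app_data` are hypothetical, and a small temporary rds file stands in for the real fst/rds files.

```r
# Hypothetical names throughout: load_app_data(), ensure_data_loaded(),
# DATA_LOADED, app_data. In the real app this logic would live in global.R.

load_app_data <- function(path) {
  # Stand-in for the slow read step (our real files are fst and rds).
  readRDS(path)
}

ensure_data_loaded <- function(path, env = globalenv()) {
  # Load only if the flag has not already been set in this R process.
  if (!isTRUE(get0("DATA_LOADED", envir = env, inherits = FALSE))) {
    assign("app_data", load_app_data(path), envir = env)
    assign("DATA_LOADED", TRUE, envir = env)
    return(TRUE)   # a load actually happened
  }
  FALSE            # data was already in memory; nothing was reloaded
}

# Small demonstration with a temporary file instead of the real data.
tmp <- tempfile(fileext = ".rds")
saveRDS(data.frame(id = 1:3, value = c(10, 20, 30)), tmp)

first_call  <- ensure_data_loaded(tmp)  # loads from disk
second_call <- ensure_data_loaded(tmp)  # skips the load
```

As far as we understand, a flag like this only lives within a single R process, so any additional worker process that gets started would still do its own full load.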

shinyapps.io Settings:

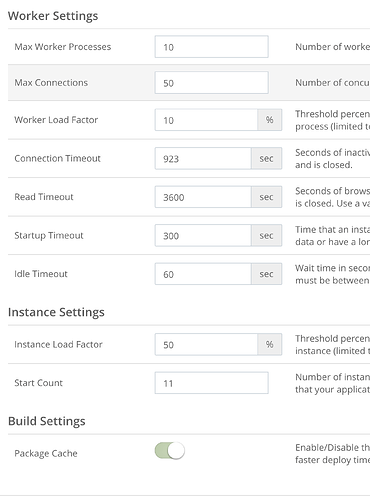

Our current settings are shown in the screenshot above. The app is xxxlarge, with multiple files ranging from 1.5M to 13M rows (the largest are loaded as fst files; the smaller ones are rds). We are near the maximum upload size for IO.

Is there a way to configure the server to eliminate (or at least reduce) the number of times it kicks people off?

It would be really great to know why this is happening. Is there a way to see why the server kicked people off? It seemed that the server would kick some people off and would leave others on. I'm not sure what I'm looking for in the logs. I don't see any indication of when the users were kicked off, so it's hard to troubleshoot.

Any thoughts or advice would be greatly appreciated.

Steve