Hi all,

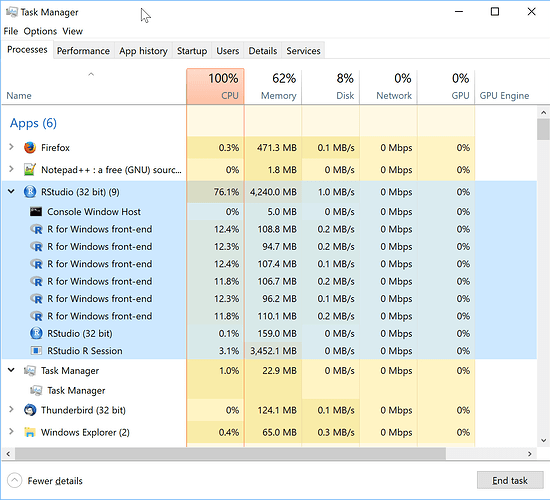

I have RStudio running some `foreach` loops in parallel, and when RStudio's memory footprint approaches 4GB, the child processes bomb out. Everything runs fine when I run serially and stay below 4GB, and also when I run in parallel on a reduced dataset.

It's running 64-bit R, but RStudio is listed as a 32-bit application in Task Manager, which makes me suspect a 4GB ceiling is to blame. (Troubleshooting the crash is tough, hence my circumstantial sleuthing here.)
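One quick way to double-check the "64-bit R" part from inside the session is to look at the pointer size R reports. This is a minimal sketch using only base R (`.Machine$sizeof.pointer` and `R.version$arch`); it reports on the R process the code runs in, not on the RStudio application shown in Task Manager.

```r
# Size of a pointer in bytes for this R build: 8 on 64-bit R, 4 on 32-bit R
ptr_bytes <- .Machine$sizeof.pointer
cat("R architecture:", R.version$arch, "\n")
cat("Pointer size:", ptr_bytes, "bytes\n")

# Theoretical address space a pointer of that width can span, in GiB
# (2^32 bytes = 4 GiB for a 32-bit build; vastly more for a 64-bit build)
addr_space_gib <- 2^(8 * ptr_bytes) / 2^30
cat("Theoretical address space:", addr_space_gib, "GiB\n")
```

On a 64-bit build you would expect a pointer size of 8 bytes; a value of 4 would mean the R session itself is 32-bit and capped at a 4 GiB address space.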

I haven't found a 64-bit RStudio for Windows anywhere. Any other thoughts on how to get around the issue, short of reworking my code to stay under the memory limit? Any help would be greatly appreciated.

The specific error I get is the following (it doesn't really help, but I've included it here for the curious):

`Error in unserialize(socklist[[n]]) : error reading from connection`
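Since the `socklist` in that message points to a socket cluster, one way to get more than this opaque error is to send each worker's output to a log file, so anything a worker prints before it dies is kept. This is a minimal sketch using base `parallel` (the `outfile` argument of `makeCluster()`), with `parLapply()` standing in for the actual `foreach` loop; the log file name and the toy task are hypothetical placeholders.

```r
library(parallel)

# Hypothetical log file; each worker appends its stdout/stderr here,
# so messages printed just before a worker crashes are not lost
log_file <- file.path(tempdir(), "worker_log.txt")

# Two socket (PSOCK) workers with output redirected to the log file
cl <- makeCluster(2, outfile = log_file)

res <- parLapply(cl, 1:4, function(i) {
  # Record which worker handles the task and its current memory use
  cat("task", i, "on pid", Sys.getpid(), "\n")
  print(gc())
  i^2  # placeholder for the real per-iteration work
})

stopCluster(cl)
cat(readLines(log_file), sep = "\n")
```

With doParallel, the same `cl` could be registered via `registerDoParallel(cl)` so that `%dopar%` runs on these logged workers.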

Thanks,

Allie