Hello!

I need to create a dataset encoded with autoencoders.

I have installed and loaded the following libraries:

library("rminer")

library("keras")

library("tensorflow")

library("reticulate")

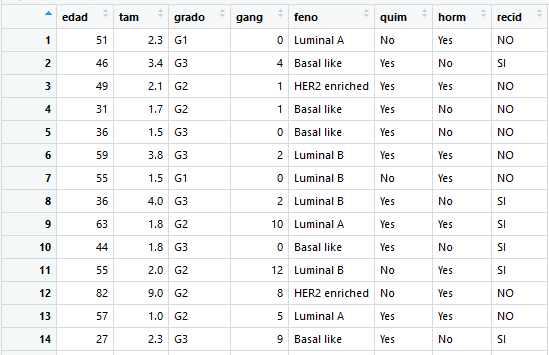

I have divided my data set into two parts, training and test, with the holdout method. Datos is my dataset.

I get the training and test data, removing the column that I want to predict:

x_train <- subset(Datos[Division$tr,], select = -recid)

x_test <- subset(Datos[Division$ts,], select = -recid)

Then I convert the data sets to matrices (I do not know if this is necessary):

x_train_matrix = data.matrix(x_train)

x_test_matrix = data.matrix(x_test)
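On whether the conversion is needed: keras models are normally fitted on numeric matrices or arrays, so some conversion is expected. A small sketch of what `data.matrix()` does to a non-numeric column may help. The column `edad` and the level `"Si"` are made up for illustration; only `quim` and `"No"` appear in this thread.

```r
# Toy data frame: one numeric column and one categorical column.
# `edad` and the level "Si" are hypothetical.
toy <- data.frame(
  edad = c(50, 61, 47),
  quim = c("No", "Si", "No"),
  stringsAsFactors = TRUE
)

# data.matrix() replaces factor columns by their internal integer codes.
m <- data.matrix(toy)
print(m)
# The quim column becomes 1, 2, 1 (levels sorted alphabetically: "No", "Si").
```

The result is numeric, but the codes 1, 2, ... imply an ordering between categories, which is one reason categorical columns are usually turned into 0/1 indicator columns before being fed to a network.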

At this moment, what I want to do is the following:

Define a coding model, with an input layer and the coding layer.

Extract the encoded data, so that I can later use this reduced set of features in other training models.

original_dim <- 7L #334L #32L

Am I on the right path?

I need help to achieve the goal I have tried to explain. I hope you can help me.

Am I correct that what you intend to do is along the lines of what @max describes in his book?

https://bookdown.org/max/FES/engineering-numeric-predictors.html#autoencoders

That is, some kind of semi-supervised feature extraction to use on structurally identical datasets?

In general, a good introduction to how autoencoders work (with code in Python, but it should look pretty similar in R) is

https://blog.keras.io/building-autoencoders-in-keras.html

(In your case, probably the first two models, the simple and the sparse autoencoder, would apply.)
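As a rough idea of how the simple autoencoder from that post might look in R, here is a minimal sketch using the keras package. The function names `build_autoencoder` and `encode_with_autoencoder` are hypothetical helpers, and the choices of a linear output layer, the `"adam"` optimizer and mean squared error are assumptions that suit standardized inputs, not something taken from the blog post. The sparse variant is not shown.

```r
# Sketch of a single-hidden-layer ("simple") autoencoder with the R keras package.
# All keras calls are namespaced and wrapped in functions, so defining the
# functions does not need a working TensorFlow installation; calling them does.

build_autoencoder <- function(input_dim, encoding_dim) {
  input <- keras::layer_input(shape = c(input_dim))
  # Bottleneck layer: fewer units than input columns.
  encoded <- keras::layer_dense(input, units = encoding_dim, activation = "relu")
  # Output layer: same number of units as input columns.
  decoded <- keras::layer_dense(encoded, units = input_dim, activation = "linear")
  list(
    autoencoder = keras::keras_model(inputs = input, outputs = decoded),
    encoder     = keras::keras_model(inputs = input, outputs = encoded)
  )
}

encode_with_autoencoder <- function(x_train, x_test, encoding_dim,
                                    epochs = 50L, batch_size = 32L) {
  stopifnot(is.matrix(x_train), is.matrix(x_test),
            ncol(x_train) == ncol(x_test))
  # Take the input dimension from the data instead of hard-coding it.
  models <- build_autoencoder(ncol(x_train), encoding_dim)
  keras::compile(models$autoencoder,
                 optimizer = "adam", loss = "mean_squared_error")
  # The autoencoder is trained to reproduce its own input.
  keras::fit(models$autoencoder, x = x_train, y = x_train,
             epochs = epochs, batch_size = batch_size, verbose = 0)
  # The encoder shares its weights with the trained autoencoder.
  predict(models$encoder, x_test)
}
```

With numeric matrices such as `x_train_matrix` and `x_test_matrix`, a call like `encode_with_autoencoder(x_train_matrix, x_test_matrix, encoding_dim = 7L)` would return one row of encoded features per test row.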

Regarding the code snippets, some comments:

You will want to have a "bottleneck layer" "in the middle" and then an output layer of the same dimensionality as the input

The categorical data needs to be one-hot encoded

With a mix of categorical and continuous variables, it can be difficult to find an adequate loss function - mean squared error will probably work best

For better performance, standardize the numerical variables
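The preprocessing points above (one-hot encoding and standardizing) can be sketched in base R as follows. The toy values, the column `edad` and the level `"Si"` are hypothetical; `grado` and `quim` are column names from this thread. Computing the mean and standard deviation on the training rows only is an assumption about good practice, so that no information from the test rows leaks into the encoding.

```r
# Toy data; values are made up for illustration.
toy <- data.frame(
  edad  = c(50, 61, 47, 58, 66, 39),
  grado = factor(c("I", "II", "III", "II", "I", "III")),
  quim  = factor(c("No", "Si", "No", "No", "Si", "Si"))
)
train_idx <- 1:4
test_idx  <- 5:6

# One-hot encode a multi-level factor: "- 1" drops the intercept,
# so every level of grado gets its own 0/1 column.
grado_onehot <- model.matrix(~ grado - 1, data = toy)

# A two-level variable only needs a single 0/1 column.
quim01 <- as.numeric(toy$quim == "Si")

x <- cbind(edad = toy$edad, grado_onehot, quim = quim01)

# Standardize the numeric column with training-set statistics only.
mu <- mean(x[train_idx, "edad"])
s  <- sd(x[train_idx, "edad"])
x[, "edad"] <- (x[, "edad"] - mu) / s

x_train <- x[train_idx, , drop = FALSE]
x_test  <- x[test_idx, , drop = FALSE]
```

Both `x_train` and `x_test` are then plain numeric matrices with the same columns, which is the shape an autoencoder with matching input and output dimensionality expects.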

Hope this gets you started

Thanks. I'm trying to do the following, but I do not know if I'm right:

# read the dataset

Datos <- read.table(file="datos_campus_virtual.txt",header=TRUE)

# convert the categorical data to 0/1 indicator columns

for(unique_value in unique(Datos$feno)){

Datos[paste("feno", unique_value, sep = ".")] <- ifelse(Datos$feno == unique_value, 1, 0)

}

for(unique_value in unique(Datos$grado)){

Datos[paste("grado", unique_value, sep = ".")] <- ifelse(Datos$grado == unique_value, 1, 0)

}

Datos$quim <- ifelse(Datos$quim=="No",0,1)

Datos$horm <- ifelse(Datos$horm=="No",0,1)

Datos$recid <- ifelse(Datos$recid=="No",0,1)

# Two sets: training (334) and test (166) with holdout

Division <- holdout(y=Datos$recid)

# prepare the training and test data

train <- Datos[Division$tr,]

x_train <- subset.data.frame(train,select = -grado)

x_train <- subset.data.frame(x_train,select = -feno)

test <- Datos[Division$ts,]

x_test <- subset.data.frame(test,select = -grado)

x_test <- subset.data.frame(x_test,select = -feno)

x_train_matrix = data.matrix(x_train)

x_test_matrix = data.matrix(x_test)

# Autoencoder and encoder

original_dim <- 15 #7L

encoding_dim <- 7 #4L

latent_dim <-15 #7L

# **********************

# Model definition

# **********************

# this is our input placeholder

input <- layer_input(shape = c(original_dim))

# "encoded" is the encoded representation of the input

encoded<- layer_dense(input,encoding_dim , activation = "relu")

# decoder layer

decoded<- layer_dense(encoded, latent_dim , activation = "sigmoid")

model_enconded <- keras_model(input, encoded)

model_autoencoder <- keras_model(input, decoded)

# **********************

# Compile

# **********************

model_autoencoder %>% compile(optimizer = 'adadelta', loss = 'binary_crossentropy')

# **********************

# training

# **********************

history <- model_autoencoder %>% fit(x_train_matrix, x_train_matrix,epochs=50,batch_size=256)

# predict: encode the test data

feature <- predict(object = model_enconded,x = x_test_matrix)

# save the new data

write.csv(feature, file = "dataencoded.csv",row.names = FALSE)

Max

July 18, 2018, 7:38pm

There's a better workflow example given here. I would avoid using data.table if you are going to put the data into a model; most model functions don't work with them because of how they store the data.

Also, having a small reproducible example is the main way for people to check/verify your code. Otherwise, it's all guesswork on our part.
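One way to make such an example is to simulate a small data frame with the same column names as the real file, so anyone can run the code without access to `datos_campus_virtual.txt`. This is only a sketch: the level names, the sample size and the simple random split are assumptions, and the split stands in for `holdout()` rather than reproducing it.

```r
set.seed(123)
n <- 20

# Simulated stand-in for the real data; all values are made up.
Datos <- data.frame(
  feno  = sample(c("A", "B", "C"), n, replace = TRUE),
  grado = sample(c("I", "II", "III"), n, replace = TRUE),
  quim  = sample(c("No", "Si"), n, replace = TRUE),
  horm  = sample(c("No", "Si"), n, replace = TRUE),
  recid = sample(c("No", "Si"), n, replace = TRUE),
  stringsAsFactors = FALSE
)

# Simple random holdout: about two thirds for training, the rest for testing.
tr <- sample(seq_len(n), size = round(2 * n / 3))
ts <- setdiff(seq_len(n), tr)

str(Datos)
```

Posting a block like this together with the model code lets others reproduce the problem exactly instead of guessing at the structure of the data.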